While most mainstream AI tools include strict safety layers and moderation protocols, many power users prefer systems with fewer restrictions, especially when the goal is privacy, experimentation, and full control over models and settings. In 2026, “unmoderated” often doesn’t mean “no rules”; it usually means you choose the model, you choose the deployment, and you control how guardrails (if any) are implemented.

These minimally moderated and self-hosted AI tools are popular with developers, researchers, writers, and roleplayers who want transparent behavior, configurable prompts, adjustable memory, and the ability to run models locally or on private infrastructure. They’re also valuable for sensitive workflows, since offline or self-hosted setups can reduce data exposure compared to fully cloud-based assistants. However, with greater freedom comes greater responsibility: users still need to respect platform terms, follow local laws, and use AI ethically.

This page ranks the top unmoderated or lightly moderated AI tools of 2026 based on autonomy, flexibility, ease of setup, and practical performance. Whether you’re building a private assistant, testing open models, or creating a custom chat experience on your own hardware, the tools below provide a strong foundation for “AI on your terms.”

Top Paid Unmoderated AI Tools

| Rank | Tool | Approach | Price | Notes |
|---|---|---|---|---|
| #1 | OpenRouter | Model marketplace + single API | Pay-as-you-go | Policies vary by model/provider |
| #2 | Runpod | Cloud GPUs to run your own stack | Usage-based | Full deployment control with private pods |
| #3 | Hugging Face Inference Endpoints | Deploy open models on dedicated infra | Hourly usage-based | Great for production + custom checkpoints |
| #4 | Lambda Cloud | On-demand GPU instances & clusters | Usage-based | Ideal for heavy models and fine-tuning |
| #5 | Vast.ai | GPU marketplace for custom deployments | Usage-based | Flexible setups; pick hardware by budget |

OpenRouter

OpenRouter is one of the easiest ways to access a wide range of language models through a single API, making it a strong choice for developers who want flexibility without managing multiple vendor integrations. Instead of locking you into one assistant experience, OpenRouter lets you choose the model that fits your workflow — whether that’s a lightweight model for quick drafts, a reasoning-focused model for complex problem solving, or an open-weights option for experimentation. It’s best described as “control through choice”: you decide which models to use and how your app behaves, while staying on a pay-as-you-go pricing model that scales with usage. Keep in mind that moderation and usage policies can differ by model and provider, so it’s a great platform for flexibility — but not a guarantee of “no restrictions.” If your goal is building a customizable AI product, testing multiple models quickly, or powering a personal interface like a private chat client, OpenRouter is the most convenient place to start.
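As a rough sketch of what “a single API” means in practice, the snippet below builds (but does not send) a chat request for OpenRouter’s OpenAI-compatible chat completions endpoint. The model IDs and the `OPENROUTER_API_KEY` variable name are placeholders assumed for illustration; check the provider’s model list for real IDs.

```python
import json
import os
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(model: str, user_message: str, api_key: str) -> urllib.request.Request:
    """Build a POST request for one chat turn; the caller decides when to send it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY", "")  # assumed variable name
    # Switching models is a one-string change; these IDs are illustrative only.
    for model_id in ("example-vendor/small-model", "example-vendor/reasoning-model"):
        req = build_chat_request(model_id, "Summarize RAG in one sentence.", key)
        print(req.get_method(), req.full_url, json.loads(req.data)["model"])
        # To actually send it: urllib.request.urlopen(req), which needs a valid key.
```

Because only the `model` string changes between requests, comparing several models against the same prompt becomes a short loop rather than a set of separate vendor integrations.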

Runpod

Runpod is a strong option for running open models with a high degree of autonomy, because it gives you direct access to cloud GPUs without forcing you into a single “assistant” wrapper. You can deploy your own preferred stack (such as Ollama, Open WebUI, text-generation-webui, or a custom API) and configure prompts, memory, system rules, and model selection yourself. This makes Runpod well suited to advanced users building private assistants, roleplay experiences, research prototypes, or internal tools where you want to control the environment and minimize third-party interference. Since you’re essentially renting compute, costs depend on the hardware you pick and how long it runs; in exchange, you control the whole stack. If you want the freedom of self-hosting without needing to buy a GPU, Runpod is one of the best paid “unmoderated” paths in 2026.
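To make the “hardware and runtime” trade-off concrete, here is a back-of-the-envelope estimate of usage-based cost. The hourly rate is a made-up figure for illustration, not a quoted Runpod price, and the sketch ignores storage and network charges.

```python
def estimate_monthly_cost(hourly_rate_usd: float, hours_per_day: float, days: int = 30) -> float:
    """Usage-based cost: hourly rate times the hours the pod is actually running."""
    if hourly_rate_usd < 0 or not 0 <= hours_per_day <= 24 or days < 0:
        raise ValueError("rate and days must be non-negative; hours_per_day must be within 0..24")
    return round(hourly_rate_usd * hours_per_day * days, 2)


if __name__ == "__main__":
    rate = 0.50  # hypothetical USD per GPU-hour
    print("4 h/day:  ", estimate_monthly_cost(rate, 4))
    print("always on:", estimate_monthly_cost(rate, 24))
```

At the hypothetical $0.50 per hour, a pod used four hours a day comes to $60 over 30 days, while leaving it running around the clock comes to $360, which is why stopping idle pods matters on usage-based pricing.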

Hugging Face Inference Endpoints

Hugging Face Inference Endpoints is a powerful way to deploy open-source models (or your own fine-tuned checkpoints) onto dedicated, scalable infrastructure. Instead of relying on a consumer chat UI, you get an endpoint you can integrate into your own apps and workflows — meaning you control the user experience, prompts, and downstream behavior. This is especially valuable for teams building production tools, research systems, or private company assistants where you want predictable uptime and a clean deployment story. Inference Endpoints can be used for straightforward “chat with a model” setups, but it shines when you want to operationalize a specific model (or version) and keep your environment consistent. For users who want freedom through engineering control — rather than a single platform’s chat rules — Hugging Face is one of the most practical paid options available.

Lambda Cloud

Lambda Cloud is built for serious AI workloads: think larger models, faster inference, fine-tuning, and scalable experiments that would be difficult to run on consumer hardware. If your “minimal filters” goal is really about running your own models and configurations at high performance, Lambda is a strong pick because it provides high-end GPU infrastructure and cluster options that can handle demanding setups. Power users often choose Lambda when they want to host their own private AI services, run multi-GPU inference, or fine-tune open weights for specific domains (like tutoring, research assistance, or long-form writing). Since Lambda is infrastructure-first, you keep control over what you deploy and how you deploy it — but you’re also responsible for security, access, and ethical use. If you’re comfortable running your own stack, Lambda offers the horsepower to make “AI on your terms” feel fast and reliable.

Vast.ai

Vast.ai is a GPU marketplace that appeals to users who want flexibility and cost control when running custom AI deployments. Instead of choosing from a small set of fixed cloud instances, you can browse available machines and select hardware that fits your performance needs and budget — then install your preferred tooling on top. This makes Vast.ai a popular option for experimentation, prototyping, and “bring-your-own-model” workflows where you want to run open weights with your own prompt rules and settings. It’s especially useful when you’re testing multiple model sizes or trying different inference backends and want the freedom to scale up (or down) without buying hardware. As with any infrastructure platform, the key benefit is control — but it also means you should take security seriously and keep any deployments private unless you know exactly how to protect them.
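One way to think about “hardware that fits your performance needs and budget” is to estimate how much GPU memory the model weights need and then pick the cheapest machine that fits. The sketch below is illustrative only: the offers are invented, the 20% headroom factor is an assumption, and the estimate covers weights alone (not the KV cache or activations).

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class GpuOffer:
    name: str
    vram_gb: float
    usd_per_hour: float


def weights_vram_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights only: parameters times bytes per parameter."""
    return params_billions * bits_per_weight / 8


def cheapest_fit(
    offers: Sequence[GpuOffer],
    needed_gb: float,
    max_usd_per_hour: float,
    headroom: float = 1.2,  # assumed safety margin for cache and runtime overhead
) -> Optional[GpuOffer]:
    """Return the cheapest offer with enough VRAM within budget, or None if nothing fits."""
    candidates = [
        o for o in offers
        if o.vram_gb >= needed_gb * headroom and o.usd_per_hour <= max_usd_per_hour
    ]
    return min(candidates, key=lambda o: o.usd_per_hour, default=None)


if __name__ == "__main__":
    # Hypothetical marketplace listings, not real prices.
    offers = [
        GpuOffer("gpu-a", 8, 0.15),
        GpuOffer("gpu-b", 24, 0.40),
        GpuOffer("gpu-c", 80, 1.80),
    ]
    need = weights_vram_gb(7, 4)  # a 7B-parameter model quantized to 4 bits
    print(need, cheapest_fit(offers, need, max_usd_per_hour=1.0))
```

A 7B-parameter model at 4 bits per weight needs about 3.5 GB for weights, so the smallest listing fits; a 70B model at the same precision needs about 35 GB and only fits the largest one.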

Top Free Unmoderated AI Tools

| Rank | Tool | Approach | Moderation Level | Notes |
|---|---|---|---|---|
| #1 | Ollama | Local model runner + simple API | User controlled | Fast setup for running open models locally |
| #2 | Open WebUI | Self-hosted web interface for local/cloud models | User controlled | Great “ChatGPT-style” UI for your own models |
| #3 | AnythingLLM | Local/private “chat with documents” workspace | User controlled | Strong for private RAG and study/research vaults |
| #4 | GPT4All | Offline desktop app for local LLMs | User controlled | Simple, private, beginner-friendly local setup |
| #5 | text-generation-webui | Advanced local Web UI with multiple backends | User controlled | Highly configurable; popular with power users |

Ollama

Ollama is one of the most popular ways to run open models locally in 2026 because it’s fast to install, straightforward to use, and works well as the foundation for a private AI workflow. Instead of relying on a public chatbot with strict filters, Ollama runs models on your own machine and exposes a simple local API — meaning you can connect it to tools like Open WebUI, AnythingLLM, or your own custom app. This is “minimal moderation” in the most practical sense: you’re not negotiating with a third-party UI, and your prompts and outputs stay on your device unless you route them elsewhere. It’s a great choice for drafting, coding help, brainstorming, and experimenting with different model families, especially if you want a clean, reliable local setup without heavy configuration.
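For a sense of what that local API looks like from your own code, here is a minimal sketch against Ollama’s `/api/generate` endpoint on its default port. It assumes Ollama is running locally and that a model has already been pulled; the model name `llama3` is only an example.

```python
import json
import urllib.request

# Ollama listens on localhost:11434 by default.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request and return the generated text (needs a running Ollama server)."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    req = build_generate_request("llama3", "Give me three blog title ideas.")
    print(req.full_url, json.loads(req.data))
    # Uncomment once Ollama is running and the model is pulled:
    # print(generate("llama3", "Give me three blog title ideas."))
```

Since the request goes to `localhost`, the prompt and the response stay on your machine unless you point `url` somewhere else.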

Open WebUI

Open WebUI gives you a polished, self-hosted chat interface that feels familiar to anyone who has used mainstream AI assistants — except it’s built to run “on your terms.” You can connect it to local runners like Ollama or to OpenAI-compatible APIs, then customize system prompts, workflows, and model settings to match your preferences. For many users, Open WebUI is the missing piece that turns local models into a daily-driver tool: conversations, organization, and a clean UI without surrendering control to a single vendor’s moderation layer. It’s especially useful for people who want a private assistant feel, plus the flexibility to switch models based on tasks (short answers vs deep reasoning vs creative writing). If you want the convenience of a modern AI chat app while keeping deployment control, Open WebUI is one of the best free options available.

AnythingLLM

AnythingLLM is a strong pick for users who want “unmoderated” flexibility for real work — especially research, studying, and private knowledge management. It’s designed to help you chat with your own documents and notes (often called RAG: retrieval-augmented generation), while letting you choose the model and deployment style that fits your privacy needs. You can run it locally for a fully private workflow or connect it to external APIs if you prefer. This is ideal for students, professionals, and creators who don’t want to paste sensitive material into public chat tools, and who want more control over how information is stored and searched. If your version of “minimal filters” is really “maximum privacy + control over my knowledge base,” AnythingLLM is one of the best free platforms to build on.
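The core idea behind RAG can be sketched in a few lines: find the document chunks most similar to the question, then place them in the prompt sent to the model. This is a toy illustration using word-count cosine similarity, not AnythingLLM’s implementation; real tools typically use embedding models and a vector store, and all function names here are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return the k chunks most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(question: str, chunks: List[str], k: int = 2) -> str:
    """Put the retrieved chunks in front of the question as context for the model."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    notes = [
        "The lease renews every January.",
        "Cats sleep most of the day.",
        "Rent is due on the first of each month.",
    ]
    print(build_prompt("When is rent due?", notes, k=1))
```

The resulting prompt can be sent to whichever model you run, local or remote, which is where the privacy choice comes in: the documents only travel as far as the model endpoint you pick.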

GPT4All

GPT4All is a user-friendly desktop application that makes local AI accessible, even if you’re not a developer. Instead of setting up containers or managing command-line tools, you can install the app, download compatible models, and start chatting — all while keeping your work on-device. That local-first approach is exactly why GPT4All appears on “unmoderated” lists: you control the environment, you control the model, and you aren’t dependent on an external chatbot’s content rules to use the tool. It’s especially good for private writing, brainstorming, offline study support, and experimentation with open models on everyday hardware. If you want a simple local assistant experience without building a full stack, GPT4All is a great place to start.

text-generation-webui

text-generation-webui (often associated with the Oobabooga project) is one of the most flexible local AI interfaces available, built for power users who want deep control over model loading, backends, parameters, and advanced features. It supports multiple inference approaches (like llama.cpp and Transformers-style backends), offers rich configuration options, and can be run fully offline. This is the tool you pick when you want to tune generation behavior, test different model formats, adjust performance settings, and experiment with UI extensions — all without relying on a commercial assistant’s opinionated guardrails. It has a steeper learning curve than beginner apps, but the payoff is maximum control. For advanced users building a custom, lightly restricted local experience, text-generation-webui remains one of the best free “do anything” platforms in 2026.
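Two of the generation settings that tools of this kind commonly expose are temperature and top-p. The sketch below shows what those knobs do to a token probability distribution in general terms; it is a standalone illustration with made-up logits, not text-generation-webui’s code or API.

```python
import math
from typing import Dict, List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Turn logits into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs: List[float], top_p: float) -> Dict[int, float]:
    """Keep the smallest set of most likely tokens whose mass reaches top_p, then renormalize."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}


if __name__ == "__main__":
    logits = [2.0, 1.0, 0.0]  # made-up scores for three candidate tokens
    for t in (0.5, 1.0, 2.0):
        print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])
    print(top_p_filter([0.5, 0.3, 0.2], top_p=0.7))
```

Lower temperatures concentrate probability on the top token (more predictable output), higher ones flatten it (more varied output), and top-p trims the unlikely tail before sampling.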

Rankings

Chatbots

AI chatbots have quickly evolved from simple assistants into powerful, multi-purpose tools used by millions of people every day...

Image Generators

AI image generators are revolutionizing the way creatives, marketers, and developers produce visual content by transforming text prompts into detailed, customized...

Writing Assistants

AI writing assistants have become indispensable tools for anyone who writes — from students and bloggers to business professionals and marketers...

Deepfake Detection

As deepfake technology becomes more advanced and accessible, detecting AI-manipulated content is now a critical challenge across journalism, education, law, and...

Productivity & Calendar

AI productivity and calendar tools have become essential for professionals, entrepreneurs, and students looking to make the most of their time without getting overwhelmed...

Natural Language To Code

Natural language to code tools are transforming software development by enabling users to build apps, websites, and workflows without needing advanced programming...

Blog

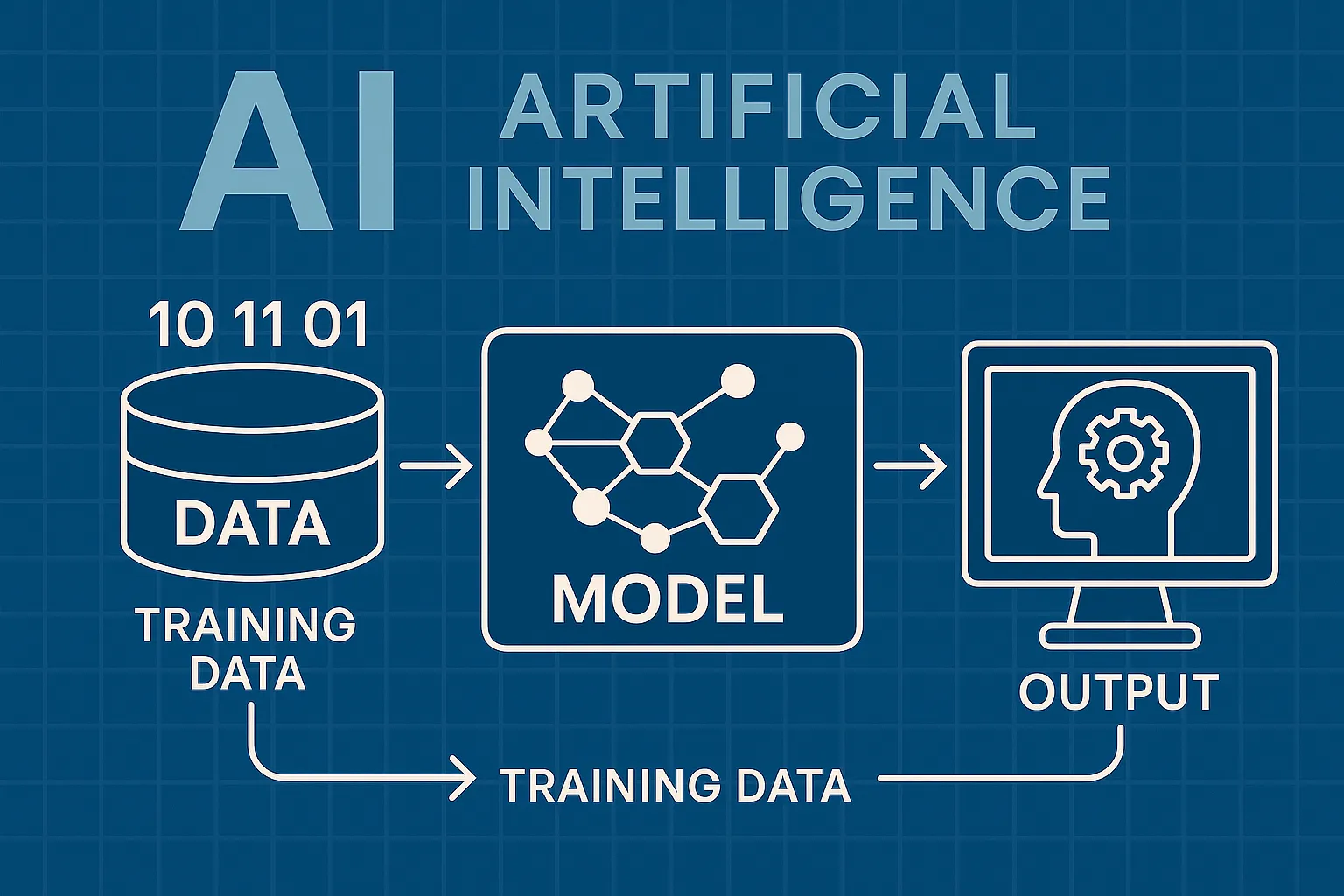

How AI Actually Works

Understand the basics of how AI systems learn, make decisions, and power tools like chatbots, image generators, and virtual assistants.

What Is Vibe Coding?

Discover the rise of vibe coding — an intuitive, aesthetic-first approach to building websites and digital experiences with help from AI tools.

7 Common Myths About AI

Think AI is conscious, infallible, or coming for every job? This post debunks the most widespread misconceptions about artificial intelligence today.

The Future of AI

From generative agents to real-world robotics, discover how AI might reshape society, creativity, and communication in the years ahead.

How AI Is Changing the Job Market

Will AI replace your job — or create new ones? Explore which careers are evolving, vanishing, or emerging in the AI-driven economy.

Common Issues with AI

Hallucinations, bias, privacy risks — learn about the most pressing problems in current AI systems and what causes them.