As the AI ecosystem evolves, a new category of tools has emerged: AI for AI. These platforms are designed to enhance, optimize, or automate interactions between AI systems, whether that means generating better prompts, refining model outputs, chaining multiple AI tasks, or integrating language models into workflows. This page highlights the top tools that help you get more out of the AI systems you already use, comparing free and paid options that improve efficiency, scalability, and precision. Whether you're a developer building advanced workflows, a researcher testing model outputs, or a power user automating prompt chains, these tools offer a smarter way to interact with and extend AI capabilities. We've ranked each tool on innovation, ease of use, compatibility, and feature set. If you're looking to supercharge your AI stack in 2026, this guide covers the best tools designed to work alongside or on top of today's leading AI models.

Top Paid AI Tools for Creating AI

| Rank | Tool | Focus | Price | Best For |
|---|---|---|---|---|
| #1 | OpenAI API Platform | Frontier models + tool/agent building | Pay-as-you-go | Agents, apps, production workflows |
| #2 | Anthropic Claude API | High-quality LLMs + safety-first deployments | Usage-based pricing | Reliable assistants, analysis, tool use |
| #3 | Google Vertex AI | GenAI + ML platform + agent tooling | Usage-based pricing | Enterprise ML, Gemini integration, MLOps |
| #4 | Amazon Bedrock | Managed foundation models + guardrails | Usage-based pricing | AWS-native genAI, security, governance |
| #5 | LangSmith | LLM observability + evals + agent debugging | Free tier + from $39/seat/month | Shipping and monitoring reliable agents |

OpenAI API Platform

The OpenAI API Platform is one of the most flexible “build-on-top” options for teams creating AI systems in 2026, especially when you want strong model quality, broad multimodal capability, and a mature developer ecosystem. Instead of training from scratch, you can compose real applications by combining model calls with tool execution, structured outputs, retrieval, and safety controls. That makes it ideal for agent-style products (multi-step workflows that plan, call tools, and verify results), internal copilots, customer support automation, and specialized assistants that need consistent behavior at scale. The platform is also practical for rapid iteration: you can prototype quickly, then harden the same workflow for production by adding guardrails, evaluation suites, and monitoring. Pricing is pay-as-you-go, which works well for indie developers and for enterprises that want to scale usage without renegotiating contracts every time their app grows.
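
To make "model calls with tool execution and structured outputs" concrete, here is a minimal sketch of the dispatch step in plain Python. It does not use the OpenAI SDK: `fake_model`, the `TOOLS` registry, and the JSON shape are all assumptions made up for illustration, standing in for whatever a real model and tool schema would return.

```python
import json
from typing import Callable, Dict

# Hypothetical tool registry: names and functions are illustrative only.
TOOLS: Dict[str, Callable[..., float]] = {
    "add": lambda a, b: a + b,
    "multiply": lambda a, b: a * b,
}


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; returns a JSON-encoded tool request."""
    return json.dumps({"tool": "multiply", "args": {"a": 6, "b": 7}})


def run_tool_call(raw: str) -> float:
    """Parse the model's structured output, validate it, then run the tool."""
    request = json.loads(raw)
    name = request.get("tool")
    if name not in TOOLS:
        # Guardrail: never execute a tool the application did not register.
        raise ValueError(f"unknown tool: {name!r}")
    args = request.get("args", {})
    if not isinstance(args, dict):
        raise ValueError("args must be a JSON object")
    return TOOLS[name](**args)


result = run_tool_call(fake_model("What is 6 times 7?"))
print(result)  # 42
```

The point of the validation step is that the model only proposes an action; the application decides whether it is allowed to run.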

Anthropic Claude API

Anthropic’s Claude API is a top choice for builders who prioritize dependable outputs, strong instruction-following, and safety-oriented production use. In practice, Claude is widely used for systems that need careful reasoning, long-context document work, and tool-augmented assistants that must behave predictably in real business workflows. Claude’s developer platform is designed around practical agent building: you can connect external tools, implement structured response formats, and build multi-step pipelines where the model plans and executes actions across different services. For teams doing “AI for AI,” Claude is especially useful as a judge model for evaluations, a reviewer for prompt iterations, or a high-precision backbone model for complex automations. Pricing is usage-based, so it can fit everything from small prototypes to large deployments, and it’s commonly paired with observability/eval tools to keep performance consistent as your prompts and product evolve.
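
The "judge model" idea can be sketched without any real API. In the example below, `stub_judge` is a hypothetical stand-in for a call to a judge model (its word-overlap scoring is invented purely so the code runs), and `pairwise_verdict` shows one common precaution: asking the judge twice with the answer order swapped, and only declaring a winner when both runs agree.

```python
from typing import Callable

# (question, first_answer, second_answer) -> "first" or "second"
Judge = Callable[[str, str, str], str]


def stub_judge(question: str, first: str, second: str) -> str:
    """Hypothetical judge: prefers the answer sharing more words with the question.

    On a draw it picks whichever answer was shown first, which mimics the
    position bias a real judge model can have.
    """
    words = set(question.lower().split())

    def overlap(answer: str) -> int:
        return len(words & set(answer.lower().split()))

    return "first" if overlap(first) >= overlap(second) else "second"


def pairwise_verdict(judge: Judge, question: str, a: str, b: str) -> str:
    """Compare answers a and b in both orders; return 'a', 'b', or 'tie'."""
    a_wins_when_first = judge(question, a, b) == "first"
    a_wins_when_second = judge(question, b, a) == "second"
    if a_wins_when_first and a_wins_when_second:
        return "a"
    if not a_wins_when_first and not a_wins_when_second:
        return "b"
    return "tie"  # the verdict flipped with the order, so do not trust it


question = "what is the capital of france"
print(pairwise_verdict(stub_judge, question, "the capital of france is paris", "paris"))
print(pairwise_verdict(stub_judge, question, "x", "y"))
```

With a real judge you would replace `stub_judge` with a function that sends a rubric plus both answers to the model and parses its verdict.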

Google Vertex AI

Vertex AI is Google Cloud’s unified platform for building, deploying, and operating AI systems—covering classic ML pipelines and modern generative AI in the same environment. It’s a strong fit when you want a single “control plane” for datasets, training jobs, managed endpoints, evaluations, and governance, while also integrating foundation models (including Gemini) for text, vision, and multimodal workflows. Vertex AI is particularly compelling for enterprise teams already on Google Cloud because it reduces glue work: you can connect data sources, create reproducible pipelines, and deploy scalable inference endpoints with familiar cloud-native security and IAM patterns. For AI-for-AI use cases, Vertex AI supports agent development and testing workflows, model monitoring, and evaluation loops that help teams measure quality changes over time as prompts, tools, and retrieval strategies evolve. Pricing is usage-based and typically maps cleanly to real production consumption.

Amazon Bedrock

Amazon Bedrock is AWS’s managed foundation-model platform for organizations that want to build production generative AI systems inside the AWS ecosystem with enterprise-grade controls. Bedrock is designed for teams that care about governance, compliance, and predictable operations: it offers a structured way to access and manage multiple model families while keeping security, permissions, logging, and network configuration consistent with other AWS services. In practical “AI for AI” stacks, Bedrock is often used to power agent backends, retrieval pipelines, and internal copilots that integrate with AWS-native data stores and infrastructure. It’s a solid choice when your company already standardizes on AWS tooling and wants to minimize custom platform work while still deploying real AI applications. Pricing is usage-based, which makes it straightforward to start small and scale as your app’s token volume grows.

LangSmith

LangSmith has become one of the most widely used “AI-for-AI” layers for teams shipping LLM apps and agents, because it focuses on the hard part most builders run into: knowing what actually happened inside a multi-step workflow and improving it without guessing. LangSmith gives you tracing (so you can see each tool call, intermediate step, retrieval result, and prompt), evaluation workflows (so you can score outputs across datasets and catch regressions), and monitoring/alerting (so you can detect failures in production before users complain). This is exactly what you want when you’re chaining models together, experimenting with different prompts, or running agentic flows that can fail in subtle ways. It works well for solo developers who want fast debugging and for teams that need shared visibility across a production agent stack. It offers a free tier, with paid plans starting around $39/seat/month plus usage-based components depending on volume.
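
To show what "tracing" means in practice, here is a minimal sketch in plain Python. It is not the LangSmith SDK: the `traced` decorator, the `TRACE` list, and the two toy steps are assumptions invented for illustration. The idea is simply that every step records its inputs, its output or error, and how long it took.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# In-memory trace log; a real tool would ship these records to a backend.
TRACE: List[Dict[str, Any]] = []


def traced(step_name: str) -> Callable:
    """Decorator that records inputs, output or error, and duration per call."""

    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: Dict[str, Any] = {"step": step_name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                record["output"] = fn(*args, **kwargs)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                record["seconds"] = time.perf_counter() - start
                TRACE.append(record)

        return wrapper

    return decorator


@traced("retrieve")
def retrieve(query: str) -> List[str]:
    return ["doc about " + query]  # placeholder for a real retriever


@traced("generate")
def generate(query: str, docs: List[str]) -> str:
    return f"Answer to {query!r} using {len(docs)} document(s)"  # placeholder model


answer = generate("pricing", retrieve("pricing"))
for record in TRACE:
    print(record["step"], "->", record.get("output", record.get("error")))
```

Even this toy version makes a failing step visible: an exception is logged with its step name before it propagates.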

Top Free AI Tools for Creating AI

| Rank | Tool | Focus | Limitations | Ideal Use |
|---|---|---|---|---|
| #1 | LangChain | LLM apps, tools, and RAG pipelines | Developer setup required | Building production LLM workflows |
| #2 | LlamaIndex (OSS) | Data orchestration for RAG + agents | Tuning and architecture choices on you | Document-heavy agent systems |
| #3 | DSPy | Optimizing prompts/programs for LMs | More “engineering” than drag-and-drop | Systematic prompt optimization |
| #4 | Microsoft AutoGen | Multi-agent workflows and coordination | Fast-moving ecosystem and patterns | Agent research and prototypes |
| #5 | Phoenix (OSS) | LLM tracing + evaluation + debugging | Self-hosting for full control | Evals and observability on a budget |

LangChain

LangChain remains one of the most practical open-source foundations for “AI for AI” systems because it focuses on composition: chaining model calls with retrieval, tools, memory, routing logic, and structured outputs to create multi-step workflows that behave like real applications. It’s commonly used for retrieval-augmented generation (RAG), agent-style assistants that can call external APIs, and orchestration layers that combine multiple models or strategies depending on the task. In 2026, the value of LangChain is less about “hello world prompts” and more about building repeatable patterns: reliable tool calling, retry strategies, output validation, and modular components that let you swap models/providers without rewriting your whole app. The trade-off is that LangChain is a developer tool, not a finished product—so you’ll still need to decide architecture and write code to integrate it with your stack. If you’re building a serious AI workflow and want a flexible, widely supported ecosystem, it’s still a top free option.
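
The repeatable patterns mentioned above (retry strategies, output validation, swappable providers) can be sketched in a few lines of plain Python. This is not LangChain's API: `Provider`, `call_with_validation`, and `make_flaky_provider` are hypothetical names, and the provider here is a stub that fails once on purpose.

```python
import json
from typing import Callable, List, Optional

# Any function mapping a prompt to raw text can act as a provider,
# which is what makes swapping models a one-line change.
Provider = Callable[[str], str]


def call_with_validation(
    provider: Provider, prompt: str, required_keys: List[str], max_attempts: int = 3
) -> dict:
    """Call the provider until it returns a JSON object with the required keys."""
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        raw = provider(prompt)
        try:
            data = json.loads(raw)
            if not isinstance(data, dict):
                raise ValueError("expected a JSON object")
            missing = [key for key in required_keys if key not in data]
            if missing:
                raise ValueError(f"missing keys: {missing}")
            return data
        except ValueError as exc:  # json.JSONDecodeError is a ValueError subclass
            last_error = exc
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {last_error}")


def make_flaky_provider() -> Provider:
    """Stub provider: returns chatty non-JSON text once, then valid JSON."""
    calls = {"count": 0}

    def provider(prompt: str) -> str:
        calls["count"] += 1
        if calls["count"] == 1:
            return "Sure! Here is the JSON you asked for"
        return json.dumps({"sentiment": "positive", "confidence": 0.9})

    return provider


result = call_with_validation(
    make_flaky_provider(), "Classify: 'great product'", ["sentiment", "confidence"]
)
print(result)
```

A production version would usually add backoff between attempts and feed the validation error back into the next prompt; both are left out here to keep the sketch short.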

LlamaIndex (OSS)

LlamaIndex is an open-source framework designed to solve one of the biggest real-world bottlenecks in LLM systems: connecting models to your data in a way that stays accurate, scalable, and maintainable. It provides building blocks for ingestion, indexing, retrieval, routing, and evaluation—so you can create document-aware agents and RAG pipelines that work with PDFs, knowledge bases, internal wikis, product docs, and more. For “AI for AI,” this is extremely useful because it turns your data layer into something agent-friendly: you can build workflows that retrieve, cite, summarize, extract structured fields, or answer questions using your sources rather than guessing. The main limitation is that you still have to make smart design choices (chunking strategy, retriever type, embedding model, reranking, grounding rules), and those choices strongly affect quality. If your AI system lives or dies on document accuracy, LlamaIndex is one of the best free toolkits to start with.
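
To illustrate why chunking strategy matters, here is a minimal sketch of overlapping chunks plus a toy retriever. It does not use LlamaIndex: `chunk_words` and `retrieve` are hypothetical helpers, and the word-overlap score is a deliberately crude assumption standing in for embeddings and reranking.

```python
from typing import List


def chunk_words(text: str, size: int, overlap: int) -> List[str]:
    """Split text into chunks of `size` words, each sharing `overlap` words with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def retrieve(query: str, chunks: List[str], top_k: int = 1) -> List[str]:
    """Rank chunks by how many query words they contain (a stand-in for vector search)."""
    query_words = set(query.lower().split())
    ranked = sorted(
        chunks,
        key=lambda chunk: len(query_words & set(chunk.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]


document = (
    "Refunds are issued within 30 days. Shipping takes five business days. "
    "Support is available by email."
)
chunks = chunk_words(document, size=6, overlap=2)
print(chunks)
print(retrieve("shipping time", chunks))
```

Changing `size` or `overlap` changes which sentences land in the same chunk, and therefore what the model sees at answer time.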

DSPy

DSPy stands out because it treats LLM workflows more like software you can engineer and optimize—rather than a pile of fragile prompt strings. Instead of manually tweaking prompts forever, DSPy lets you define modular “programs” and then uses optimizers to improve prompt/behavior based on examples and objective functions. That’s a big deal for AI-for-AI systems where you need repeatability: if your agent’s performance changes after a model update, or if your prompt grows messy as features are added, DSPy gives you a more systematic way to iterate and measure improvements. It’s especially useful for building robust classifiers, structured extraction pipelines, RAG systems, and agent loops where the “right” prompt isn’t obvious and you want a methodical tuning process. The downside is that DSPy feels more like engineering than no-code—so it’s best for developers who are comfortable writing tests, curating datasets, and evaluating outputs over time.
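
The core idea of optimizing against examples and an objective function can be shown with a toy search over candidate instructions. This is not DSPy's API and is far simpler than its optimizers: `stub_model`, `exact_match`, and `optimize_instruction` are hypothetical names, and the model's behavior is hard-coded so the example runs offline.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]  # (input text, gold label)


def stub_model(instruction: str, text: str) -> str:
    """Hypothetical model: answers tersely only when the instruction asks for one word."""
    label = "positive" if "good" in text.lower() else "negative"
    if "one word" in instruction.lower():
        return label
    return f"I think the sentiment is {label}."


def exact_match(prediction: str, gold: str) -> float:
    """Objective function: 1.0 if the prediction equals the gold label, else 0.0."""
    return float(prediction.strip().lower() == gold)


def optimize_instruction(
    candidates: List[str],
    examples: List[Example],
    model: Callable[[str, str], str],
    metric: Callable[[str, str], float],
) -> Tuple[str, float]:
    """Return the candidate instruction with the highest mean metric on the examples."""
    best, best_score = candidates[0], -1.0
    for instruction in candidates:
        scores = [metric(model(instruction, text), gold) for text, gold in examples]
        mean = sum(scores) / len(scores)
        if mean > best_score:
            best, best_score = instruction, mean
    return best, best_score


examples = [("good value for money", "positive"), ("broke in a day", "negative")]
candidates = [
    "Classify the sentiment.",
    "Answer with one word: positive or negative.",
]
best, score = optimize_instruction(candidates, examples, stub_model, exact_match)
print(best, score)
```

The useful habit is the same at any scale: the prompt is chosen by a measured score on a dataset rather than by eyeballing a few outputs.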

Microsoft AutoGen

Microsoft AutoGen is an open-source framework focused on agentic systems—especially multi-agent workflows where specialized agents collaborate, critique, and refine outputs to solve harder tasks than a single model call can handle. This is a common pattern in AI-for-AI stacks: one agent plans, another executes, another verifies, and another formats outputs into a final deliverable. AutoGen provides tools and abstractions that make these coordination patterns easier to prototype, including structured conversations between agents, tool use, and iterative improvement loops. It’s particularly useful if you’re experimenting with how agents should coordinate (delegation, reflection, debate, self-correction) or building research-style prototypes before committing to a production architecture. The key limitation is that agent frameworks evolve quickly, and best practices shift as model capabilities change—so you should expect to iterate and potentially refactor. Still, for free agent experimentation and advanced coordination logic, AutoGen is a strong option.
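
The critique-and-refine pattern described above can be sketched with two plain functions playing the agents. This is not AutoGen's API: `writer`, `critic`, and `run_loop` are hypothetical, and the writer's drafts are hard-coded where a real system would call a model.

```python
from typing import List, Tuple


def writer(task: str, feedback: str) -> str:
    """Hypothetical writer agent: shortens its draft when told it is too long."""
    if "too long" in feedback:
        return "New release: faster, fewer bugs"
    return "Our new release improves speed and fixes several bugs across the whole platform"


def critic(draft: str, max_words: int) -> str:
    """Hypothetical critic agent: returns an empty string when the draft is acceptable."""
    count = len(draft.split())
    if count <= max_words:
        return ""
    return f"too long: {count} words, limit {max_words}"


def run_loop(task: str, max_words: int, max_rounds: int = 3) -> Tuple[str, List[Tuple[str, str]]]:
    """Alternate writer and critic until the critic approves or rounds run out."""
    feedback = ""
    history: List[Tuple[str, str]] = []
    for _ in range(max_rounds):
        draft = writer(task, feedback)
        feedback = critic(draft, max_words)
        history.append((draft, feedback))
        if not feedback:
            return draft, history
    raise RuntimeError(f"no approved draft after {max_rounds} rounds")


final, history = run_loop("Write a release headline", max_words=6)
print(final)
print(len(history), "round(s)")
```

The round limit matters: without it, two agents that never agree would loop, and spend tokens, indefinitely.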

Phoenix (OSS)

Phoenix is an open-source observability and evaluation platform built for AI applications, making it a great free choice when you need visibility into what your system is doing—without immediately committing to a paid LLMOps stack. It helps you trace runs end-to-end (prompts, tool calls, retrieval results, intermediate steps), analyze where errors happen, and evaluate outputs using repeatable tests so you can track quality over time. That’s exactly what “AI for AI” needs: as soon as you chain more than one step together, debugging becomes less about a single prompt and more about understanding a workflow. Phoenix supports experimentation and troubleshooting loops that let you compare changes, spot regressions, and improve reliability before you ship updates. The main trade-off is operational: to get full control and scale, you’ll likely self-host and integrate it with your environment. If you want serious evals and tracing on a budget, Phoenix is one of the best free tools available.
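
Spotting regressions comes down to comparing two versions of a pipeline on the same test set, example by example. The sketch below is plain Python and does not use Phoenix: the dataset, the two stub pipelines, and the helper names are assumptions chosen so the code runs on its own.

```python
from typing import Callable, List, Tuple

Pipeline = Callable[[str], str]
Dataset = List[Tuple[str, str]]  # (question, expected answer)


def accuracy(dataset: Dataset, pipeline: Pipeline) -> float:
    """Fraction of questions the pipeline answers exactly right."""
    return sum(pipeline(q) == expected for q, expected in dataset) / len(dataset)


def find_regressions(dataset: Dataset, baseline: Pipeline, candidate: Pipeline) -> List[str]:
    """Questions the baseline got right that the candidate now gets wrong."""
    regressions: List[str] = []
    for question, expected in dataset:
        if baseline(question) == expected and candidate(question) != expected:
            regressions.append(question)
    return regressions


dataset: Dataset = [
    ("2+2", "4"),
    ("capital of France", "Paris"),
    ("largest planet", "Jupiter"),
]
# Stub pipelines: lookup tables standing in for two versions of a real workflow.
baseline_answers = {"2+2": "4", "capital of France": "Paris", "largest planet": "Saturn"}
candidate_answers = {"2+2": "4", "capital of France": "Lyon", "largest planet": "Jupiter"}


def baseline(question: str) -> str:
    return baseline_answers[question]


def candidate(question: str) -> str:
    return candidate_answers[question]


print(accuracy(dataset, baseline), accuracy(dataset, candidate))
print(find_regressions(dataset, baseline, candidate))
```

Both versions score the same overall here, yet the candidate broke an answer that used to work, which is why per-example comparison is worth keeping alongside aggregate metrics.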

Rankings

Chatbots

AI chatbots have quickly evolved from simple assistants into powerful, multi-purpose tools used by millions of people every day...

Image Generators

AI image generators are revolutionizing the way creatives, marketers, and developers produce visual content by transforming text prompts into detailed, customized...

Writing Assistants

AI writing assistants have become indispensable tools for anyone who writes — from students and bloggers to business professionals and marketers...

Deepfake Detection

As deepfake technology becomes more advanced and accessible, detecting AI-manipulated content is now a critical challenge across journalism, education, law, and...

Productivity & Calendar

AI productivity and calendar tools have become essential for professionals, entrepreneurs, and students looking to make the most of their time without getting overwhelmed...

Natural Language To Code

Natural language to code tools are transforming software development by enabling users to build apps, websites, and workflows without needing advanced programming...

Blog

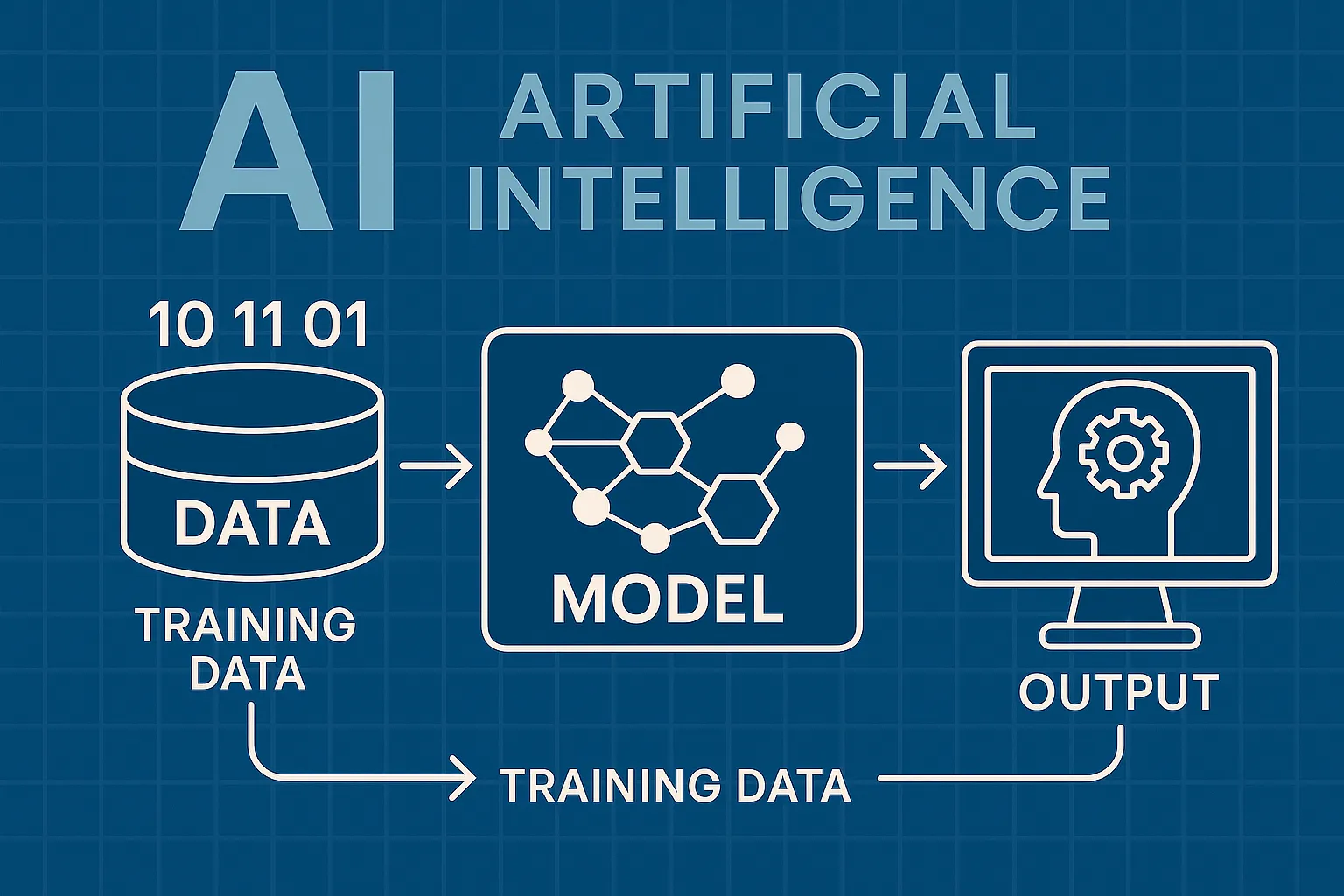

How AI Actually Works

Understand the basics of how AI systems learn, make decisions, and power tools like chatbots, image generators, and virtual assistants.

What Is Vibe Coding?

Discover the rise of vibe coding — an intuitive, aesthetic-first approach to building websites and digital experiences with help from AI tools.

7 Common Myths About AI

Think AI is conscious, infallible, or coming for every job? This post debunks the most widespread misconceptions about artificial intelligence today.

The Future of AI

From generative agents to real-world robotics, discover how AI might reshape society, creativity, and communication in the years ahead.

How AI Is Changing the Job Market

Will AI replace your job — or create new ones? Explore which careers are evolving, vanishing, or emerging in the AI-driven economy.

Common Issues with AI

Hallucinations, bias, privacy risks — learn about the most pressing problems in current AI systems and what causes them.